- AI/ML

Disaggregated Inference, Part 1: When & Where to Route

- Media & Entertainment

A New Live Streaming Origin Built for Global Scale

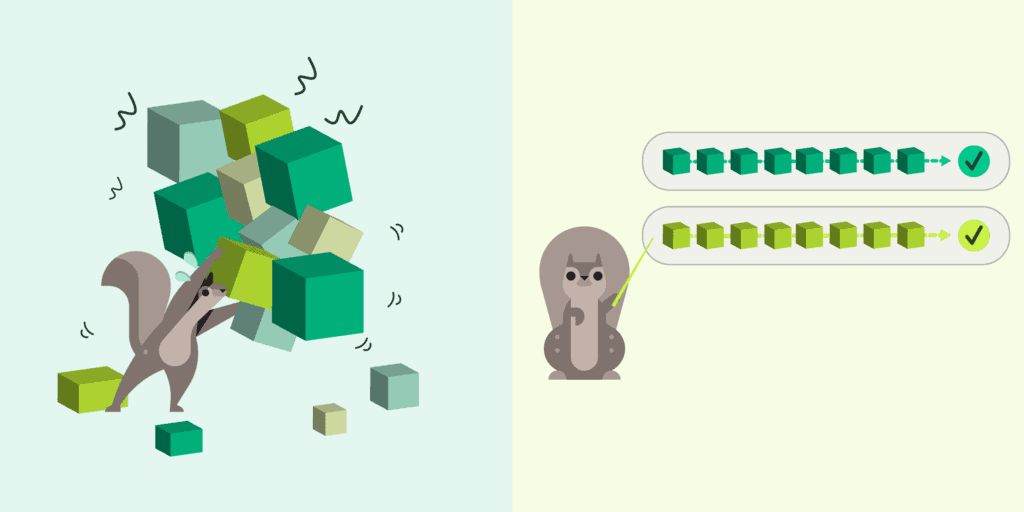

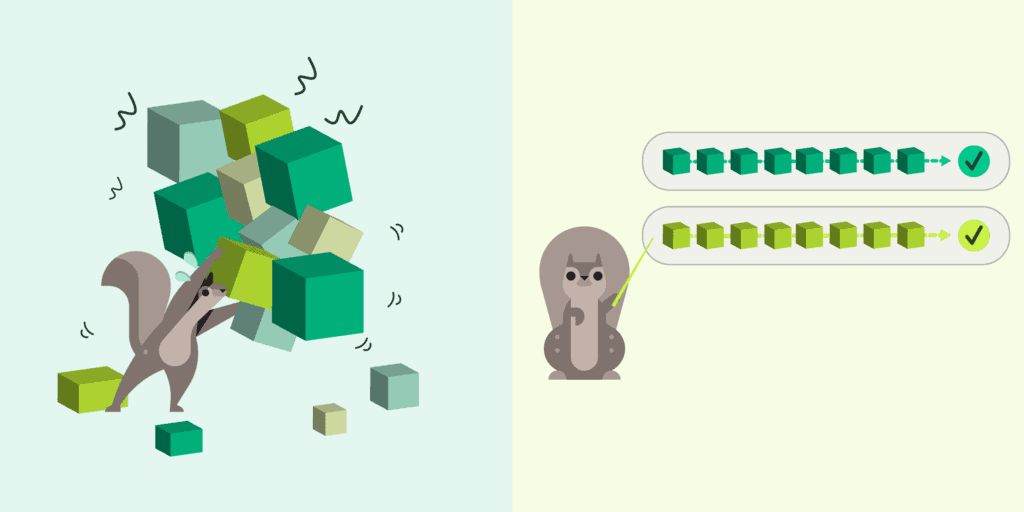

LLM inference is becoming a distributed systems problem. Explore the architecture patterns reshaping AI infrastructure.