Imagine this: you’ve worked hard on your big idea—the MVP that’s going to launch your startup, the new service that’s going to solve all your customers’ pain points, the little fix that’s going to make all your users so happy.

And then imagine that it works. You turned your idea into a unicorn. You launched your new top offering. You suddenly turned thousands of loyal users into loud advocates. Congratulations! Party time!

And now imagine it all collapsing because you got too big, too fast—or because one of the backend components had planned maintenance during your peak. Bummer.

If you really believe in your ideas—and you take even a minute to look around at the world we live in today—you need to build for scale from the start. And the cool thing is that you actually can. Gone are the days of true scale only being possible for the giants who can maintain and staff their own on-prem fleet. With the ubiquity of the cloud and serverless, it’s possible to be scale-ready before you even go to prod. There are just four key concepts to keep front and center as you build.

1. Elasticity: Capacity on demand

Elasticity is the ability of infrastructure to automatically adjust its resources based on real-time demand. Even if you’re not operating at internet scale, traffic patterns are usually spiky. Scale-ready systems should be prepared to dynamically scale resources out or (more importantly) back in according to what’s needed. This brings up an important point: the rate of elasticity matters. For those spiky workloads, elasticity needs to be as close to instant as possible. If you have to wait 10 minutes for autoscaling to kick in, the event might already be over.
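To make the point about the rate of elasticity concrete, here is a minimal simulation sketch. Everything in it is an illustrative assumption rather than a model of any real autoscaler: the `dropped_requests` function, the one-tick-per-minute granularity, and the made-up traffic numbers. It simply counts how many requests exceed capacity when capacity follows demand with a delay.

```python
def dropped_requests(demand, base_capacity, scale_lag):
    """Count requests that exceed capacity when scaling reacts late.

    demand: requests arriving per tick (here, one tick = one minute).
    base_capacity: requests per tick the system can always handle.
    scale_lag: ticks between demand appearing and capacity catching up.
    """
    dropped = 0
    for tick, incoming in enumerate(demand):
        # Capacity follows the demand seen `scale_lag` ticks ago,
        # and never falls below the base capacity.
        seen = demand[tick - scale_lag] if tick >= scale_lag else 0
        capacity = max(base_capacity, seen)
        dropped += max(0, incoming - capacity)
    return dropped


# Hypothetical spike: 3 quiet minutes, 5 busy minutes, 3 quiet minutes.
spike = [100] * 3 + [1000] * 5 + [100] * 3

print(dropped_requests(spike, base_capacity=100, scale_lag=0))   # 0
print(dropped_requests(spike, base_capacity=100, scale_lag=10))  # 4500
```

With instant scaling nothing is dropped. With a 10-minute lag the extra capacity never arrives during the 5-minute spike, so every request above the base capacity is lost.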

Serverless services like Amazon DynamoDB and all of Momento’s offerings enable elasticity by leveraging multi-tenancy to keep capacity on hand and always ready for allocation, making it possible to maintain optimal performance—not to mention cost-efficiency!

It’s kind of like if you’re gearing up to bake cinnamon rolls for an unpredictable number of guests. That’s daunting if you’ve only got one oven—but it’s no problem if you’ve got the keys to the commercial bakery next door with hundreds of preheated ovens ready for you when you need them.

2. Availability: Up and running

Availability is the degree to which a system stays operational and accessible whenever users need it. Availability and elasticity are related: without the instant elasticity described above, a traffic spike can overwhelm your system, and availability suffers. Scale-ready architectures are designed with redundancy, failover mechanisms, and load balancing to reduce service interruptions, such as the ones that happen when a shard in your caching fleet gets overloaded with hot keys. And that’s just the unplanned downtime side of things.

Planned downtime also impacts availability, which is why electing to use serverless services without maintenance windows is a smart move when thinking about scale. You don’t want an ill-timed update or patch to get in the way of your system’s success.
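A rough back-of-the-envelope sketch shows why both redundancy and maintenance windows matter. It assumes replicas fail independently, which is optimistic for real systems, and the 99% figure and the 30-minute weekly window are made-up inputs, not measurements of any service.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600


def combined_availability(replica_availability, replicas):
    """Probability that at least one of several independent replicas is up."""
    return 1 - (1 - replica_availability) ** replicas


def downtime_minutes_per_year(availability):
    """Expected minutes of downtime per year at a given availability."""
    return (1 - availability) * MINUTES_PER_YEAR


for n in (1, 2, 3):
    a = combined_availability(0.99, n)
    print(f"{n} replica(s): {a:.6f} available, "
          f"about {downtime_minutes_per_year(a):.1f} minutes down per year")

# Planned downtime counts too: a hypothetical 30-minute weekly window.
planned_minutes = 30 * 52
print(f"Weekly maintenance alone: {planned_minutes} minutes per year")
```

Under these assumptions, adding a second 99% replica takes the system to roughly 99.99%, while a modest weekly maintenance window on its own adds up to 26 hours of downtime a year.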

Think of what would happen if you’re baking up a storm and your pantry suddenly becomes locked. No matter how many preheated ovens you’ve got, you can’t bake if you don’t have the ingredients.

3. Observability: Knowing what’s happening

Observability is the ability to understand and analyze the internal state of a system through outputs like logs, metrics, traces, and insights. As systems grow in complexity and scale, this vision into a system’s performance, reliability, and health becomes increasingly crucial—and increasingly challenging, especially if observability isn’t an early design consideration.

Designing for observability up front is essential for maintaining optimal performance, and it is a powerful enabler of elasticity and availability. It is the difference between promptly addressing an app bottleneck and picking up the pieces of a broken app experience, and between correctly flagging an anomaly and mistaking it for standard behavior. By embracing observability, organizations can make informed decisions, optimize resource utilization, and enhance the overall resilience of their systems as they evolve and scale.
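As a small illustration of building metrics in early, the sketch below records latency samples per operation and reports a tail percentile. The names `timed`, `latencies_ms`, and `percentile` are hypothetical helpers written for this example, and the in-memory dictionary stands in for whatever metrics backend you actually use.

```python
import math
import time
from collections import defaultdict
from contextlib import contextmanager

# In-memory latency samples per operation (a stand-in for a real metrics backend).
latencies_ms = defaultdict(list)


@contextmanager
def timed(operation):
    """Record how long the wrapped block takes, in milliseconds."""
    start = time.perf_counter()
    try:
        yield
    finally:
        latencies_ms[operation].append((time.perf_counter() - start) * 1000)


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


for _ in range(20):
    with timed("lookup"):
        sum(range(10_000))  # placeholder for real work

print(f"p99 lookup latency: {percentile(latencies_ms['lookup'], 99):.3f} ms")
```

Tracking a tail percentile rather than an average is a deliberate choice here: at scale, the slowest few requests are often what users notice first.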

To use our baking analogy again, if you can’t check and see how the bake is going, the cinnamon rolls may end up totally raw—or burned to a crisp!

4. Speed: Keeping latencies low

And now for the least surprising one of all. The future is here. Users expect lightning-fast experiences—and that expectation only grows with the scale of your applications and services.

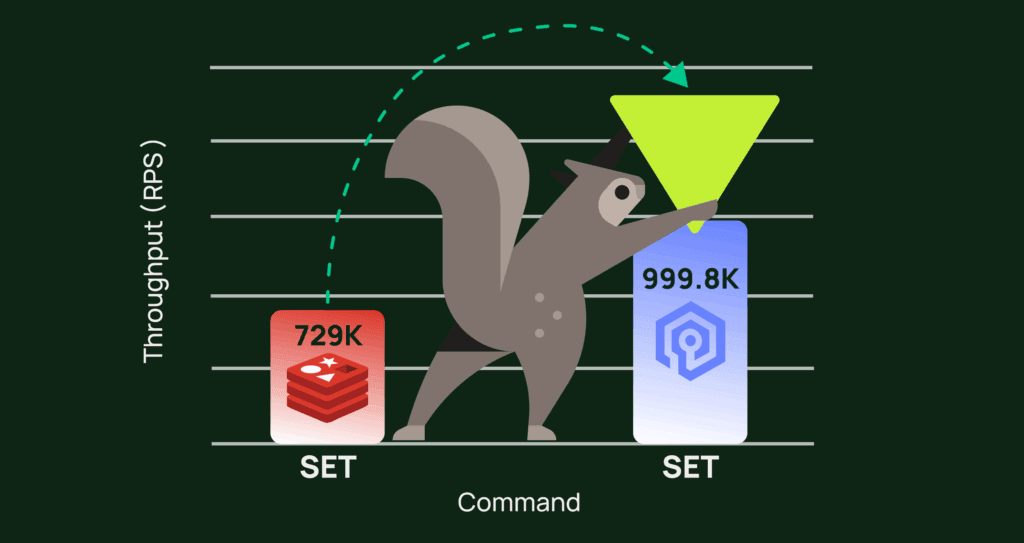

The key to speed lies in optimizing every aspect of the system, from code efficiency to network performance. Microservices can go a long way here, allowing for more granular optimization opportunities that really add up at scale. Caching is another powerful lever you can pull to reduce latency: reading a value from memory takes far less time than making a network call to the database (wherever that may be).
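Here is a minimal sketch of that caching idea, assuming a simple in-process read-through cache with a time-to-live. The `ReadThroughCache` class and the `load_from_database` function are hypothetical and written for this example; they are not the API of any particular caching product.

```python
import time


class ReadThroughCache:
    """Serve values from memory, calling the slow loader only on a miss."""

    def __init__(self, load, ttl_seconds, clock=time.monotonic):
        self._load = load          # slow function, e.g. a database query
        self._ttl = ttl_seconds    # how long a cached value stays fresh
        self._clock = clock        # injectable so expiry can be tested
        self._entries = {}         # key -> (value, expires_at)

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]        # cache hit: no trip to the database
        value = self._load(key)    # cache miss or expired: go to the source
        self._entries[key] = (value, now + self._ttl)
        return value


database_calls = []


def load_from_database(key):
    """Stand-in for a slow network call to the database."""
    database_calls.append(key)
    return f"value-for-{key}"


cache = ReadThroughCache(load_from_database, ttl_seconds=60)
cache.get("user:42")
cache.get("user:42")
print(len(database_calls))  # 1
```

The second read is answered from memory, so the loader runs only once. The time-to-live bounds how stale a cached value can get, which is the usual trade-off to tune.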

Back to those cinnamon rolls. You’ve got the ovens, you’ve got a cupboard well-stocked with fresh ingredients—but that cupboard is all the way across the kitchen! Think of how much time you could save yourself (and your hungry guests) if you kept the components closer.

Conclusion

If you build with elasticity, availability, observability, and speed in mind, you’ll set yourself up for success no matter how big you get. And we’re living in an era where it’s possible for anyone to uphold these tenets thanks to the power of serverless.

Now get out there and bake!

What did I miss? Are there other ingredients I should add? Tell us what you think over on X!

Jack Christie
