The following is a summary and analysis of presentations given at Unlocked Conference. The views expressed by the speakers are their own and do not necessarily reflect the editorial position of their companies.

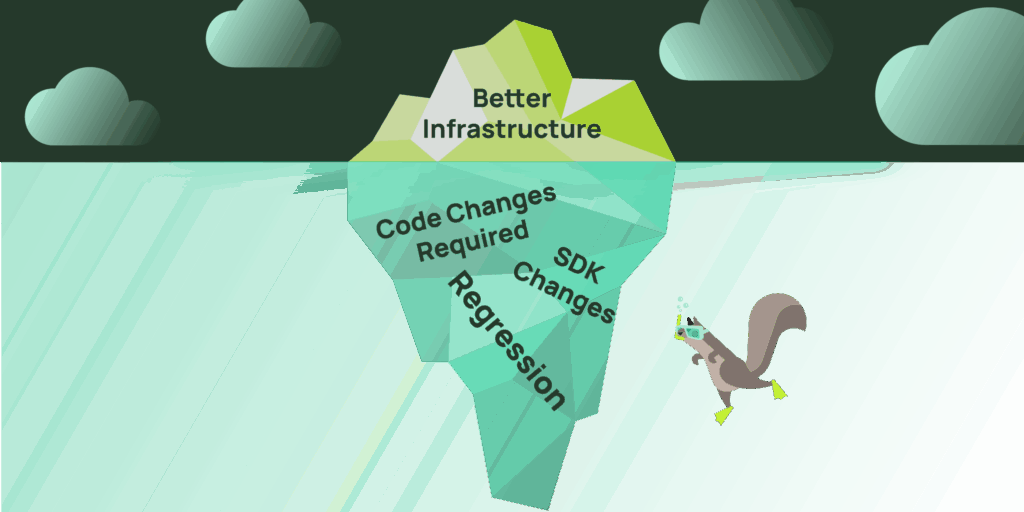

Scaling advice is easy to collect yet surprisingly difficult to apply.

A team shares how they solved a problem at massive scale. The architecture looks sound. The graphs look convincing. You try to apply the same ideas and nothing improves. In some instances, things actually get worse.

Those issues were on full display at the Unlocked Conference on January 22nd. Over the course of the day, engineers from Uber, Apple, and Mercado Libre walked through real production challenges and the changes that followed. All three operate enormous systems. All three rely heavily on caching. All three have invested years into platform infrastructure.

And yet the problems that they faced were very different.

Pressure shows up before limits

You could see it in all three talks. At small scale, systems tend to fail in obvious ways. CPU runs hot. Memory fills up. Latency climbs in a straight line. But at the scale these teams were dealing with, pressure builds in different metrics, depending on the specific workload, long before those limits are reached.

Traffic shape, client behavior, payload size, and data movement all play a role in how the pressure builds. As a result, issues arise from interaction effects rather than resource exhaustion. Uber, Apple, and Mercado Libre each described a system that appeared healthy by traditional metrics until something tipped it over.

The tricky part is that pressure looks different depending on what you built. Connection storms, event loop blocking, and bandwidth saturation are all forms of the same problem, but they require completely different solutions.

Uber and the client request connection storm

Uber’s session, led by Yang Yang and Shawn Wang, focused on lessons learned from operating a Valkey-based caching platform that handles an enormous volume of requests across thousands of clusters. The infrastructure is large, but the number of services using it is enormous. Thousands of stateless service instances, each maintaining pools of connections to cache nodes, all acting independently.

The challenges started with retries.

A brief slowdown or transient error would result in clients aggressively reconnecting to potentially thousands of instances at once. Connections were being torn down and recreated faster than the system could absorb them. Proxies struggled to keep up with connection setup. Existing connections slowed. Timeouts increased. Retries multiplied. Healthy nodes were overwhelmed without ever becoming compute bound.

From the outside, it looked like a server-side problem. Inside the system, it was a coordination problem.

To stabilize the system, client libraries were updated to behave more fairly, circuit breakers were introduced, retry behavior was tightened, and Site Reliability Engineers (SREs) began monitoring connection creation metrics.
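The talk summary does not describe Uber's client code, so the following is only a minimal sketch of two of the techniques named above: capped exponential backoff with jitter, and a simple circuit breaker. Every name here (`CircuitBreaker`, `backoff_delay`, `call_with_retries`) and every threshold is a hypothetical choice made for illustration.

```python
import random
import time


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is rejected locally."""


class CircuitBreaker:
    """Illustrative breaker; thresholds are assumptions, not Uber's values."""

    def __init__(self, failure_threshold=5, reset_after=10.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    def allow(self):
        if self.opened_at is None:
            return True
        # After the cool-down, let a trial call through (half-open).
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()


def backoff_delay(attempt, base=0.05, cap=2.0, rng=random.random):
    """Full-jitter delay: uniform between 0 and min(cap, base * 2**attempt)."""
    return rng() * min(cap, base * (2 ** attempt))


def call_with_retries(op, breaker, max_attempts=4, sleep=time.sleep):
    """Run op(), retrying connection errors with jittered backoff."""
    for attempt in range(max_attempts):
        if not breaker.allow():
            # Fail fast instead of opening yet another connection.
            raise CircuitOpenError("cache circuit open; failing fast")
        try:
            result = op()
        except ConnectionError:
            breaker.record_failure()
            if attempt == max_attempts - 1:
                raise
            sleep(backoff_delay(attempt))
        else:
            breaker.record_success()
            return result
```

The jitter spreads reconnect attempts out in time so that thousands of independent clients do not retry in lockstep, and the breaker stops a struggling client from adding connection churn while the backend recovers.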

The story Uber shared at Unlocked was a reminder that at this scale, stability depends as much on client behavior as it does on backend capacity.

Apple and the hidden cost of large payloads

Apple’s talk, given by Yiwen Zhang, approached scale from a different angle. The system was fast. Throughput was high. Operations per second looked reasonable. And yet latency spikes kept appearing under specific workloads. Here the cause was something completely different: payload size.

Valkey processes work through a single main event loop. As payloads grow, the cost of handling each request grows with them. Copying large values, building replies, and moving memory around consumes time even when the command itself is simple.

In production, moderate increases in payload size led to dramatic jumps in latency. PING times climbed to over a second during bursts without any obvious increase in traffic volume.
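A toy model can show why a trivial command such as PING slows down when it queues behind large values on a single thread. This is a sketch under stated assumptions: the function name and both cost constants are invented for illustration and are not measured Valkey numbers.

```python
def completion_times(payload_sizes, fixed_cost_us=2.0, per_kib_cost_us=1.0):
    """Return when each queued request finishes, in microseconds.

    Models one thread serving requests strictly in order. The cost
    constants are illustrative assumptions, not Valkey measurements.
    """
    clock = 0.0
    finished = []
    for size in payload_sizes:
        clock += fixed_cost_us + per_kib_cost_us * (size / 1024)
        finished.append(clock)
    return finished


KIB = 1024
MIB = 1024 * 1024

# A zero-byte PING queued behind 100 requests of 1 KiB each.
ping_behind_small = completion_times([KIB] * 100 + [0])[-1]   # 302.0 us

# The same PING queued behind 100 requests of 1 MiB each.
ping_behind_large = completion_times([MIB] * 100 + [0])[-1]   # 102602.0 us
```

The request count is identical in both cases, yet the PING finishes hundreds of times later in the second one, because it pays for every byte handled ahead of it.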

What made this difficult to diagnose was visibility. Bytes per second and operations per second looked stable. The distribution of payload sizes had changed, but existing metrics could not show it.

The improvements in Valkey 8.0 and Valkey 9.0 discussed at Unlocked focused on the read and write flows. Copy avoidance reduced unnecessary memory work. I/O threading moved expensive operations off the main thread.

For the audience at Unlocked, the lesson was that payload size reshapes the cost of every operation, even when everything else looks unchanged. Adding observability for payload size would help identify these issues before they become problems.
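One way to make the payload size distribution visible is a histogram recorded on the client side. The sketch below is an assumption about how that could look, not something presented in the talk; `PayloadSizeHistogram` and its power-of-two buckets are hypothetical choices.

```python
from collections import Counter


class PayloadSizeHistogram:
    """Counts payloads in power-of-two buckets keyed by upper bound in bytes."""

    def __init__(self):
        self.buckets = Counter()
        self.count = 0

    @staticmethod
    def bucket_for(size_bytes):
        # Smallest power of two that is >= size_bytes (minimum bucket is 1).
        if size_bytes <= 1:
            return 1
        return 1 << (size_bytes - 1).bit_length()

    def observe(self, size_bytes):
        self.buckets[self.bucket_for(size_bytes)] += 1
        self.count += 1

    def quantile(self, q):
        """Upper bound of the bucket that contains the q-th quantile."""
        if self.count == 0:
            raise ValueError("no observations")
        target = q * self.count
        seen = 0
        for upper in sorted(self.buckets):
            seen += self.buckets[upper]
            if seen >= target:
                return upper
        return max(self.buckets)


# Two workloads with the same request count and the same total bytes.
steady = PayloadSizeHistogram()
for _ in range(1000):
    steady.observe(1000)           # 1000 requests x 1000 bytes

skewed = PayloadSizeHistogram()
for _ in range(980):
    skewed.observe(100)            # 980 small requests
for _ in range(20):
    skewed.observe(45_100)         # 20 large requests

p99_steady = steady.quantile(0.99)   # 1024
p99_skewed = skewed.quantile(0.99)   # 65536
```

Both workloads move 1,000,000 bytes in 1,000 operations, so bytes per second and operations per second cannot tell them apart. The p99 bucket can.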

Mercado Libre and the cost of moving bytes

Mercado Libre’s session, presented by Ignacio Alvarez, told the story of building a cache platform for thousands of internal services across a rapidly growing organization. Early designs emphasized isolation where each application was standalone. The result was predictable behavior but poor efficiency.

As the platform grew, inefficiency became unavoidable. Shared infrastructure reduced waste but introduced new risks. Multi-tenant clusters meant that one workload could affect many others. When a shared cluster failed, it created what Alvarez called “a big headache” as multiple critical services could interfere with each other. The blast radius of a single failure had expanded dramatically.

The Mercado Libre platform had 8,000 caches running across 30,000 Valkey nodes, with infrastructure growing 30-60% year over year. Incidents in this environment were caused by something unexpected: bandwidth.

Nodes struggled with the volume of bytes moving in and out. Large values, inefficient access patterns, and ungoverned usage by internal teams combined to push systems past safe operating conditions. The effect compounded as more workloads were spun up on the platform, sharing the same infrastructure.

The response centered on control. Abstractions enforced consistent client behavior. Governance limited blast radius. Tools like an opt-in compression codec gave teams ways to reduce payload sizes. Rightsizing and workload classification reduced unnecessary data movement.
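The summary does not say how Mercado Libre's codec works, so the following is only a sketch of what opt-in compression in a client abstraction might look like. The one-byte marker format, the `min_size` threshold, and the function names are all assumptions; the only real library used is Python's standard `zlib`.

```python
import zlib

# Hypothetical one-byte markers so readers can tell how a value was stored.
RAW = b"\x00"
ZLIB = b"\x01"


def encode(value: bytes, compress: bool = False, min_size: int = 256) -> bytes:
    """Prefix the value with a marker, compressing only when opted in."""
    if compress and len(value) >= min_size:
        packed = zlib.compress(value, 6)
        # Keep the compressed form only if it actually saves bytes.
        if len(packed) < len(value):
            return ZLIB + packed
    return RAW + value


def decode(stored: bytes) -> bytes:
    """Reverse encode(), whichever marker the writer chose."""
    marker, body = stored[:1], stored[1:]
    if marker == ZLIB:
        return zlib.decompress(body)
    if marker == RAW:
        return body
    raise ValueError(f"unknown codec marker: {marker!r}")


# A repetitive JSON-like value, typical of what compresses well.
value = b'{"sku": 12345, "price": 1999}' * 200
stored = encode(value, compress=True)
restored = decode(stored)
```

Because the marker travels with the value, teams can opt in one service at a time while readers continue to handle both compressed and uncompressed entries.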

The story Mercado Libre shared at Unlocked showed how a platform matures when efficiency is treated as a core property rather than a tuning exercise.

Different systems reveal different truths of scale

These talks shared a stage at Unlocked, but very little else. One centered on retries and coordination. One on payload size and event loop behavior. One on bandwidth and governance.

What tied them together was a common pattern. Each organization ran into a constraint specific to its own system design. Uber exposed coordination risk at the client layer. Apple exposed the cost of large payloads on a single-threaded loop. Mercado Libre exposed the compounding effects of data movement in shared infrastructure.

That is why scaling stories are so easy to misunderstand. The lesson is rarely the architecture itself. The lesson is the pressure that forced the change.

At large scale, systems do not fail where diagrams suggest they should. They fail where assumptions quietly accumulate.

These three talks were just a fraction of what we covered at Unlocked San Jose in January. Unlocked Seattle happens this May, and we’re building the agenda now. Get updates about the next conference or join us to share your own scaling story. The best conversations happen when practitioners compare notes. See you there!