Your coding assistant stutters mid-function. GPU utilization reads 92%. Adding capacity doesn’t help.

One GPU is trying to do two fundamentally different jobs. Prefill (reading the prompt) saturates tensor cores. Decode (generating tokens one at a time) is memory-bound and leaves those cores mostly idle. Put them on the same card, and every arriving prompt stalls the decoders mid-stream. Users call this token jitter. Your dashboard calls it success.

The usual answer is to disaggregate: prefill on one pool of GPUs, decode on another, KV cache shipped between them. Most posts stop there. The parts that matter come after: when it’s worth the trouble, how requests find the right GPU, how the cache gets there without stalling the decode, and why your networking stack may be about to let you down.

This post (Part 1 of 3) tackles the first two: when disaggregation is worth the trouble, and how requests find the right GPU. Part 2 covers the cache handoff; Part 3 covers the data plane.

When Is Disaggregation Actually Worth the Trouble?

The crossover depends on model size and parallel topology. DistServe reports that a 13B model on a single A100 becomes prefill-compute-bound once the input crosses ~512 tokens. Splitwise shows that on BLOOM-176B, a 1,500-token prompt takes as long to prefill as decoding just six output tokens. A typical RAG or agentic request is already mostly a prefill workload. Sarathi-Serve papers over this at moderate scale by chunking prefill and interleaving it with decode on the same GPU. Push past that and the chunks themselves jitter decode badly enough that physical disaggregation wins.

LLM inference has two latency metrics that matter: Time to First Token (TTFT) and Time Per Output Token (TPOT). Chunked-prefill monolithic serving works when you can tolerate TPOT jitter as long as the first token arrives fast. But real-time voice, coding autocomplete, and conversational agents have strict TPOT budgets. Users notice 200ms gaps between tokens immediately. For strict-TPOT cases, disaggregation eliminates the interference at the source by physically isolating prefill bursts from decode streams.

Before you commit, calculate the transfer tax. For Llama-70B at FP16, the KV cache works out to roughly 320 KB per token. A 10,000-token prompt produces a 3.2 GB KV cache that has to move before generation starts. Over 100 Gbps, that’s ~250ms added to TTFT. Disaggregation only wins if that 250ms beats the tail latency you’re already eating from interference. For long-context RAG it almost certainly does.

The ability to scale prefill and decode independently pushes the same direction for chat-style workloads. Peak traffic is lopsided: a busy hour might need substantially more decode capacity for long streaming responses, with barely any new prefill thanks to short follow-ups and heavy prefix reuse. A monolithic cluster has to scale both phases together; a disaggregated cluster scales them independently. Microsoft’s Splitwise paper reports 1.4× more throughput at 20% lower hardware cost versus symmetric monolithic, or 2.35× throughput under the same power budget for decode-heavy workloads. That’s real money at cluster scale.

How Requests Find the Right GPU

Once prefill and decode live on separate pools, every request needs two scheduling decisions: which prefill node handles the prompt, and which decode node takes over. Doing this well requires more machinery than monolithic serving ever needed.

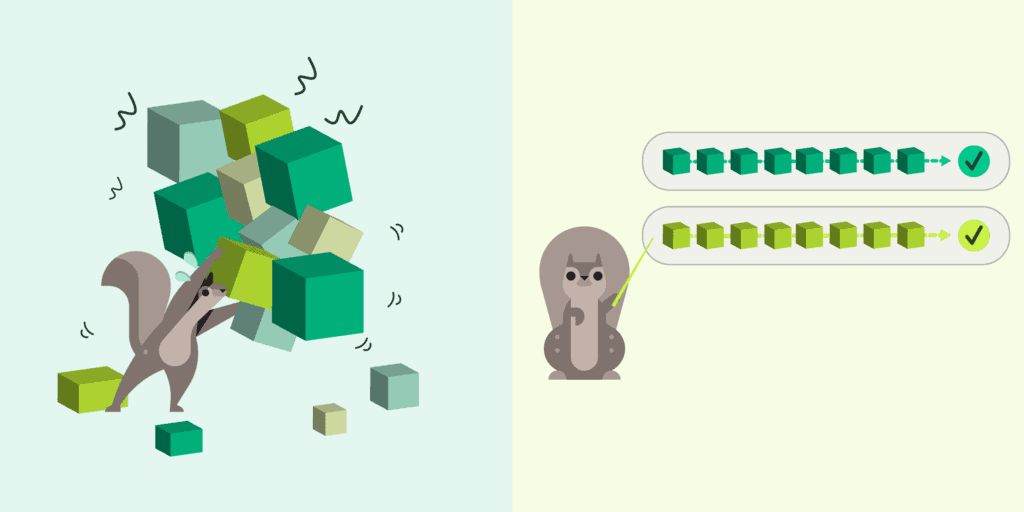

The standard design is a two-level hierarchy, articulated most clearly in Splitwise. A cluster-level scheduler routes requests across the prefill and decode pools, while a machine-level scheduler on each GPU handles continuous batching and memory paging for the requests it already owns. The split exists because the two decisions operate on different time scales: global routing tolerates tens of milliseconds of thinking, but local batching runs at microsecond granularity and can’t afford a network round-trip. Unifying them produces a scheduler that’s either too slow to batch well or too parochial to route well.

The interesting part is how the cluster-level scheduler picks a node. Naive routing (round-robin or least-loaded) ignores what each node already holds in HBM. In multi-turn conversations, system-prompt-heavy workflows, and few-shot templated agents, most tokens in a new prompt are tokens some node has already processed. A cache-aware router recognizes this and sends the request to whichever node holds the prefix. Recomputation disappears, and TTFT collapses.

NVIDIA’s Dynamo router implements this with a small cost function:

Cost = (Overlap Score Weight × Prefill Blocks) + Decode Blocks

- prefill blocks term counts how many tokens still need fresh computation on a given worker, divided by block size. If that worker already caches 90% of the prompt, its prefill blocks count approaches zero and its score plummets. The decode blocks term is a load signal (how much active generation the worker is already doing), which keeps the router from slamming a single cache-hot node. The overlap score weight is the tunable knob: high values prioritize cache locality (and TTFT), low values spread load evenly (stabilizing inter-token latency at the cost of occasional cache misses). A coding assistant with strict TTFT runs it high; a batch summarization pipeline with tolerant TTFT runs it low.

This routing matters more than it sounds. Production deployments report KV cache hit rates up to 87.4% with cache-aware routing, versus effectively zero for naive schemes. That’s an order-of-magnitude reduction in how much GPU math the cluster has to do.

Cache-aware routing isn’t the only design that works. DistServe takes a different bet: keep the runtime router simple (FCFS, shortest-queue for prefill, least-loaded for decode) and push all the intelligence into a placement algorithm that runs before deployment. The algorithm takes your workload distribution, SLOs, and cluster topology, and outputs the optimal parallelism strategy and instance counts via simulation, because actual SLO attainment depends on workload distributions too messy to model in closed form. For OPT-175B on ShareGPT-style traffic, it landed on a parallelism configuration the paper notes would be hard to find by hand. It’s also network-aware: with limited cross-node bandwidth, DistServe forces same-stage prefill and decode segments to colocate on one node so the KV transfer rides NVLink instead of slow Ethernet. The right routing strategy isn’t universal: it’s a function of prompt variance, prefix reuse, and interconnect quality.

Routing decides which GPUs handle a request, but it doesn’t say how the KV cache actually moves between them. The handoff itself is its own problem: when to start sending, where the cache lives between hops, and how to reuse it across requests. Those are orchestration questions, and they’re where most of the practical performance lives.

Next in Part 2: Moving the KV Cache Without Stalling the Decode.

Hien Luu

Hien Luu